Format: Cartoons

Language/s: English

Resource Link: View the Cartoon

Target Audience: Self-directed learning

Short Description:

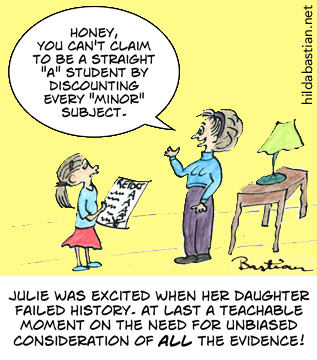

Cherry picking results can be misleading.

Key Concepts addressed:

Details

Unfortunately, little Suzy isn’t the only one falling for the temptation to dismiss or explain away inconvenient performance data. Healthcare is riddled with this, as people pick and choose studies that are easy to find or that prove their points.

In fact, most reviews of healthcare evidence don’t go through the painstaking processes needed to systematically minimize bias and show a fair picture. You can read more about how it’s done thoroughly in this explanation of systematic reviews at PubMed Health.

A fully systematic review very specifically lays out a question and how it’s going to be answered. Then the researchers stick to that study plan, no matter how welcome or unwelcome the results. They go to great lengths to find the studies that have looked at their question, and they analyze the quality and meaning of what they find.

The researchers might do a meta-analysis – a statistical technique to combine the results of studies (explained here at Statistically Funny). But you can have a systematic review without a meta-analysis – and you can do a meta-analysis of a group of studies without doing a systematic review.
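As a rough illustration of what "combining the results of studies" can mean, here is a minimal sketch of one common approach, fixed-effect inverse-variance pooling, in which more precise studies get more weight. It is not taken from the original post: the function name `pool_fixed_effect` and the three effect estimates and standard errors are hypothetical, invented only to show the arithmetic.

```python
import math


def pool_fixed_effect(estimates, std_errors):
    """Inverse-variance weighted average of study effect estimates.

    Each study is weighted by 1 / SE^2, so precise studies count for more.
    Returns the pooled estimate and its standard error.
    """
    weights = [1.0 / se ** 2 for se in std_errors]
    total_weight = sum(weights)
    pooled = sum(w * e for w, e in zip(weights, estimates)) / total_weight
    pooled_se = math.sqrt(1.0 / total_weight)
    return pooled, pooled_se


# Hypothetical numbers, for illustration only (not real trial data).
effects = [0.30, 0.10, -0.05]   # effect estimate from each study
ses = [0.20, 0.10, 0.15]        # standard error of each estimate

pooled, pooled_se = pool_fixed_effect(effects, ses)

# Cherry picking: reporting only the most flattering study.
cherry_picked = max(effects)

print(f"pooled estimate: {pooled:.3f} (SE {pooled_se:.3f})")
print(f"cherry-picked estimate: {cherry_picked:.3f}")
```

With these made-up inputs, the single most favourable study looks considerably better than the pooled result, which is the gap that cherry picking hides. Real meta-analyses involve further choices (for example, random-effects models and checks for inconsistency between studies) that this sketch leaves out.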

To help make it easier for people to sift out the fully systematic from the less thorough reviews, a group of us, led by Elaine Beller, have just published guidelines for abstracts of systematic reviews. It’s part of the PRISMA Statement initiative to improve reporting of systematic reviews.

A quick way to find systematic reviews is the National Library of Medicine’s PubMed Health. It’s a one-stop shop of systematic reviews, information based on systematic reviews and key resources to help you understand clinical effectiveness research. You can read more about PubMed Health here.

Do systematic reviews entirely solve the problem Julie saw with those school grades? Unfortunately, not always. Many trials aren’t even published at all, and no amount of searching or digging can get to them. This happens even when the trial has good news, but it happens more often with disappointing results. The “fails” can be very well-hidden. Yes, it’s as bad as it sounds: Ben Goldacre explains the problem and its consequences here.

You can help by signing up to the AllTrials campaign – please do, and encourage everyone you know to do it too. Healthcare interventions simply won’t all be able to have reliable report cards until the trials are not just done, but easy to get at.

Text reproduced from http://statistically-funny.blogspot.co.uk/ . Cartoons and text copyright Hilda Bastian, usable under Creative Commons non-commercial license, CC BY-NC-ND 4.0.

